Key Points:

- The ideas in Claude Shannon’s published papers helped jumpstart the fields of information theory and digital communications.

- During WWII, Shannon worked at Bell Labs, contributing to secret wartime projects such as cryptography, fire-control systems for anti-aircraft guns, and the secure communications system that let Churchill and Roosevelt coordinate their wartime response.

- He spent his later decades working in MIT’s Research Laboratory of Electronics, becoming a permanent faculty member. In 1978, he was awarded the status of professor emeritus.

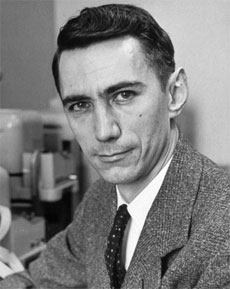

Who Is Claude Shannon?

Claude Shannon was an American computer scientist, engineer, and mathematician. The ideas in his published papers helped jumpstart the fields of information theory and digital communications, paving the way for the worldwide electronic communication networks that underpin the Digital Age.

Early life

The son of a teacher and an entrepreneur, Claude Elwood Shannon relayed his first bits of information as a crying baby on April 30, 1916, in the small town of Petoskey, Michigan. He went to both primary and secondary school in the nearby city of Gaylord and then attended the University of Michigan, where he completed two bachelor’s degrees in mathematics and electrical engineering.

Quick Facts

- Full Name: Claude Elwood Shannon

- Birth: April 30, 1916

- Death: February 24, 2001

- Net Worth: NA

- Awards: Honorary degrees from Yale, Michigan, Princeton, Edinburgh, Pittsburgh, Northwestern, Oxford, East Anglia, Carnegie-Mellon, Tufts, and the University of Pennsylvania; National Medal of Science; Stuart Ballantine Medal; Harvey Prize; Harold Pender Award; John Fritz Medal; Kyoto Prize; Shannon Award

- Children: Robert, Andrew, and Margarita

- Nationality: American

- Place of Birth: Petoskey, Michigan

- Fields of Expertise: Mathematics, computer science, electrical engineering, communications, cryptography

- Institutions: University of Michigan, Bell Labs, and MIT

- Contributions: Information theory and digital circuit design

After he graduated, Shannon pursued graduate studies at the Massachusetts Institute of Technology (MIT). There he had the opportunity to work alongside one of the most prolific computer experts of the time, Dr. Vannevar Bush. The computers they worked with were analog calculating machines Bush called “differential analyzers.” Shannon helped Bush set up differential equations on these machines, which solved calculus problems via a complex system of gears, shafts, and wheels.

While at MIT, Shannon wrote his award-winning master’s thesis, “A Symbolic Analysis of Relay and Switching Circuits.” It was the first to show that relay-switching circuits could be designed and analyzed using Boolean logic. This turned the design of digital circuits into a useful science with effective rules that still govern the manufacture of today’s chips and circuits.

In 1937, Shannon traveled to New York to do a summer internship at Bell Labs. He would later return to work there full time.

In 1940, he finished his master’s degree in electrical engineering as well as his doctoral degree in mathematics, both from MIT. He then spent a year in Princeton, New Jersey, at the Institute for Advanced Study, where he became a National Research Fellow.

Career

Bell Labs

In 1941, Shannon was hired by the mathematics department at Bell Labs, where he’d done his summer internship a few years prior. He would end up working there for 15 years and remained affiliated with Bell Labs for 31 years.

World War II was in full swing at the time, and Shannon started his career at Bell Labs making important contributions to secret wartime projects such as cryptography and fire-control systems for anti-aircraft guns. He even helped build the secure communications system that let Churchill and Roosevelt coordinate their wartime response. Although it was classified at the time, Shannon’s research would later revolutionize the science of cryptography.

In 1948, a few years after the war ended, Shannon published the paper that would change the world. Titled “A Mathematical Theory of Communication,” it showed that any kind of message could be reduced to binary digits, 1s and 0s, and transmitted over any digital medium. The paper popularized the bit as the basic unit of information used by all digital systems in the modern world.

Besides his serious research and important contributions, Shannon also loved to play. Over the years, he channeled his attention into creating a menagerie of mechanical toys. These included an automatic Rubik’s Cube solver, a juggling machine, a rocket-powered Frisbee, and a motorized Pogo stick.

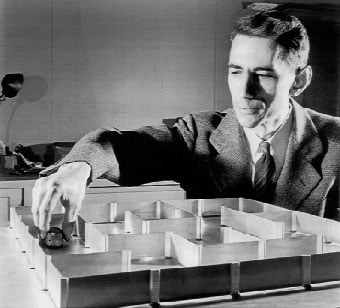

He also worked on a plethora of clever devices that seemed like they could almost think for themselves. These were early precursors to today’s machine-learning behemoths, including a computer-based chess engine, a mind-reading device, and a robotic mouse capable of learning to solve a maze.

MIT

In 1956, Shannon accepted a position at MIT as a visiting professor. Two years later, he became a permanent faculty member, and he spent his later decades working in MIT’s Research Laboratory of Electronics. In 1978, he was awarded the status of professor emeritus.

What Did Claude Shannon Invent?

Digital Circuits

Shannon’s 1937 master’s thesis, “A Symbolic Analysis of Relay and Switching Circuits,” started with the discovery that you could simplify the convoluted electromechanical relays of the telephone routing switches of the time by using a system of binary arithmetic and Boolean algebra.

He then flipped the concept on its head, showing that circuits built from binary electrical switches, which can only be on or off, could evaluate any expression in Boolean logic. These simple ideas established the theory behind the digital circuit design that we still use in all our electronic computing devices today. Shannon’s thesis is often described as one of the most significant master’s theses of the 20th century.
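As a rough illustration of the idea, the sketch below models each switch as a Boolean value: two switches in series behave like AND, two in parallel behave like OR, and a normally closed contact behaves like NOT. The function names and the half-adder example are illustrative assumptions, not taken from Shannon’s thesis.

```python
def series(a: bool, b: bool) -> bool:
    """Current flows through two switches in series only if both are closed (AND)."""
    return a and b


def parallel(a: bool, b: bool) -> bool:
    """Current flows through two switches in parallel if either is closed (OR)."""
    return a or b


def inverted(a: bool) -> bool:
    """A normally closed contact conducts when its control signal is off (NOT)."""
    return not a


def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two one-bit numbers using only the switch combinations above."""
    carry = series(a, b)
    # Exclusive OR: at least one input is on, but not both.
    total = series(parallel(a, b), inverted(carry))
    return total, carry


# Print the full truth table as "a + b = carry total".
for a in (False, True):
    for b in (False, True):
        total, carry = half_adder(a, b)
        print(int(a), "+", int(b), "=", int(carry), int(total))
```

Chaining adders like this one is enough to do binary arithmetic on numbers of any width, which hints at why on/off switches turned out to be so general.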

Information Theory

Shannon’s most consequential contribution to the Digital Age was his 1948 paper, “A Mathematical Theory of Communication.” The paper focused on answering two pressing questions of communication theory at the time:

- How to most efficiently encode any message in a noise-free environment

- How to reliably transmit the message in the presence of noise

To answer the first question, Shannon argued that any information imaginable can be quantified in the same way, regardless of the medium of communication. At the time, most people thought that communication required sending electromagnetic waves through a wire. Shannon discovered that you could use a system of 1s and 0s to encode the actual information content of any message.

That meant that no matter whether the message was written or spoken language, pictures, or videos, you could convert it into a string of binary digits or “bits.” Sending those 1s and 0s would then be much easier. This theory is what allows us to digitize anything from individual quotes and photos to entire books, songs, and movies.
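A minimal sketch of this conversion, assuming the text is first turned into bytes with UTF-8 (a modern encoding chosen here for convenience, not something from Shannon’s paper). The helper names `to_bits` and `from_bits` are hypothetical.

```python
def to_bits(message: str) -> str:
    """Encode text as a string of 1s and 0s, eight bits per UTF-8 byte."""
    return "".join(f"{byte:08b}" for byte in message.encode("utf-8"))


def from_bits(bits: str) -> str:
    """Rebuild the original text from a string of 1s and 0s."""
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
    return data.decode("utf-8")


bits = to_bits("Hi")
print(bits)             # prints 0100100001101001
print(from_bits(bits))  # prints Hi
```

Pictures, audio, and video follow the same pattern: once the content is expressed as bytes, it is a stream of bits like any other.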

To answer the second question, Shannon demonstrated that you could deliver this digitized information reliably over noisy communication channels by using probability theory to model the noise and then adding redundancy to detect and correct the errors it causes. He borrowed the concept of entropy, which is used in thermodynamics to measure disorder in the physical world, to measure the uncertainty of a message source and therefore how much information each message carries.
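The two ideas in this paragraph, entropy and redundancy, can be sketched in a few lines. The entropy function uses the standard formula H = -Σ p·log2(p) over symbol frequencies. The three-fold repetition code is only the simplest possible form of redundancy, chosen here for illustration; it is far less efficient than the codes Shannon’s theory shows to be possible. All function names are hypothetical.

```python
import math
from collections import Counter


def entropy_bits(message: str) -> float:
    """Shannon entropy of the symbol frequencies, in bits per symbol."""
    counts = Counter(message)
    total = len(message)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def encode_repetition(bits: str, copies: int = 3) -> str:
    """Add redundancy by sending every bit several times."""
    return "".join(bit * copies for bit in bits)


def decode_repetition(received: str, copies: int = 3) -> str:
    """Recover each bit by majority vote over its group of copies."""
    groups = (received[i:i + copies] for i in range(0, len(received), copies))
    return "".join("1" if g.count("1") > copies // 2 else "0" for g in groups)


# Two equally likely symbols carry exactly one bit each.
print(entropy_bits("abab"))  # prints 1.0

sent = encode_repetition("101")   # "111000111"
noisy = "110000101"               # one bit flipped in the first and third groups
print(decode_repetition(noisy))   # prints 101
```

The majority vote fails if two of the three copies of a bit are flipped, which is why practical systems rely on stronger error-correcting codes.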

The brilliance of these two solutions was that they were abstract enough to be applied to many different models of information and communication. Today, besides their use in information retrieval, Shannon’s ideas have been found useful in diverse areas like speech analytics, handwriting recognition, and other machine learning models.

Thinking Machines

In the last half of his life, Shannon became fascinated with artificial intelligence. He made a couple of important contributions to the field, including designing a few machines that could learn and react to their environment.

In 1950, he designed an electronic mouse that he programmed to solve mazes. The mouse was a magnetic creature with a control module made from a relay circuit. He named it Theseus.

Shannon created a system of mazes for his mouse that he could modify at will. He designed the mouse’s relay circuit brain to travel through the corridors of his mazes until it discovered the exit.

Once a given maze had been plotted, he could put the mouse in any location it had been before, and it would use its prior experience to immediately plot a path to the exit. If he put it in a location it hadn’t visited, it would continue its search for the exit and add the new pathways to its memory banks in an early form of machine learning. Theseus was one of the first human-made learning devices.
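The behavior described above can be imitated in software. The sketch below is not Shannon’s relay design; it is a hypothetical depth-first search over a made-up maze, with a dictionary standing in for the mouse’s memory. For every cell the mouse has visited, the memory records the neighbor it last left toward, so following those entries from any known cell leads to the exit.

```python
def explore(maze, start, goal, memory):
    """Search for the goal, recording in memory the last exit taken from each cell.

    Returns True once the mouse reaches the goal or a cell it already knows.
    """
    visited = set()

    def visit(cell):
        visited.add(cell)
        if cell == goal or cell in memory:
            return True
        for neighbor in maze[cell]:
            if neighbor in visited:
                continue
            memory[cell] = neighbor      # leave this cell toward the neighbor
            if visit(neighbor):
                return True
            memory[neighbor] = cell      # dead end: the way out is back here
        return False

    return visit(start)


def follow(memory, start, goal):
    """Walk from a known cell to the goal using only remembered exits."""
    path = [start]
    while path[-1] != goal:
        path.append(memory[path[-1]])
    return path


# A made-up maze: each cell lists the cells it opens onto. "E" is the exit.
maze = {
    "A": ["B"],
    "B": ["A", "C", "D"],
    "C": ["B"],
    "D": ["B", "E", "F"],
    "E": ["D"],
    "F": ["D", "G"],
    "G": ["F"],
}

memory = {}
explore(maze, "A", "E", memory)
print(follow(memory, "C", "E"))  # a previously visited dead end

explore(maze, "G", "E", memory)  # a new location: search until known ground
print(follow(memory, "G", "E"))
```

The second call to `explore` stops as soon as the mouse reaches a cell already in memory, which mirrors the way new corridors are added to what it has already learned.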

That same year, Shannon wrote a trailblazing paper titled “Programming a Computer for Playing Chess.” It laid the foundations for the first full human versus computer chess game played in 1956 by the Los Alamos MANIAC machine.

The paper estimated that the number of possible chess games was around 10 to the 120th power, far larger than the estimated number of atoms in the observable universe. This figure, now known as the “Shannon number,” implied that a brute-force search of every possible game would never be a practical way for a computer to play chess.
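The arithmetic behind the estimate is short. In the paper, Shannon assumed roughly 1,000 possibilities for each pair of moves (one by White, one by Black) and a typical game length of about 40 such pairs. The atom count used below, 10 to the 80th power, is a commonly cited rough estimate and is an assumption added here for comparison.

```python
options_per_move_pair = 10**3   # Shannon's rough figure for one White move plus one Black reply
move_pairs_per_game = 40        # Shannon's figure for a typical game length

game_variations = options_per_move_pair ** move_pairs_per_game
atoms_estimate = 10**80         # assumed rough estimate for the observable universe

print(game_variations == 10**120)           # prints True
print(game_variations // atoms_estimate)    # how many times larger the game count is
```

Even a machine that examined one variation per atom in the universe would cover only a vanishing fraction of the total.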

Shannon argued that a strategic algorithm that only considered the best moves in a given position would be the only workable solution. This idea is similar to the way human chess players think. It subsequently formed the basis of the most successful machine-learning chess engines still used today.
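One way to picture this kind of selective search is a minimax routine that ranks the available moves with a quick static estimate and only looks deeper at the most promising few. The sketch below is a toy under stated assumptions, not Shannon’s program: the game tree, its scores, and the names `node`, `leaf`, and `selective_minimax` are all made up for illustration.

```python
def leaf(score):
    """A position with no further moves, carrying a static score."""
    return {"score": score, "children": []}


def node(score, *children):
    """A position with a quick static score and the positions it can lead to."""
    return {"score": score, "children": list(children)}


def selective_minimax(position, depth, maximizing, width):
    """Minimax that explores only the `width` most promising moves at each level."""
    if depth == 0 or not position["children"]:
        return position["score"]
    # Rank moves by static score from the point of view of the side to move.
    ranked = sorted(position["children"], key=lambda c: c["score"], reverse=maximizing)
    values = [
        selective_minimax(child, depth - 1, not maximizing, width)
        for child in ranked[:width]
    ]
    return max(values) if maximizing else min(values)


# Scores are from the first player's point of view; higher is better for them.
root = node(
    0,
    node(3, leaf(5), leaf(-2)),
    node(1, leaf(4), leaf(6)),
    node(-4, leaf(9), leaf(8)),
)

print(selective_minimax(root, depth=2, maximizing=True, width=2))  # prints 4
print(selective_minimax(root, depth=2, maximizing=True, width=3))  # prints 8
```

The two results differ because the narrow search discards the third move, which looks poor at first glance but is actually best. That is the trade-off of selective search: far fewer positions to examine, at the risk of overlooking a move whose value only shows up deeper in the tree.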

Claude Shannon is pictured here with Theseus, the electronic mouse that he programmed to solve mazes. The mouse was a magnetic creature with a control module made from a relay circuit.

©Unknown author / public domain

Claude Shannon: Marriage and Personal Life

Marriage

In 1940, Shannon married Norma Levor, a wealthy intellectual. Their marriage lasted only a year. Levor later married a famous screenwriter and enjoyed a successful screenwriting career of her own.

In 1949, he entered into a second marriage, this time with more success. Shannon met his second wife, Mary Elizabeth Moore, while working at Bell Labs, where she worked as a numerical analyst. She would become his primary research collaborator, assisting him in creating some of his most inventive devices.

Children

Claude and Mary Shannon had three children — two sons and a daughter. Their names were Robert Shannon, Andrew Moore Shannon, and Margarita Shannon.

Claude Shannon: Awards and Achievements

Honorary Degrees

Throughout his life, Shannon was awarded honorary degrees from some of the most important universities and institutions. These included Yale, Princeton, Edinburgh, Oxford, Carnegie-Mellon, Michigan, Pittsburgh, Northwestern, East Anglia, Tufts and the University of Pennsylvania.

Shannon Award

In 1973, he became the first to receive an award created in his honor. The Shannon Award was instituted by the Institute of Electrical and Electronics Engineers (IEEE) to highlight people who have made profound and consistent contributions to information theory and electrical engineering. To this day, it’s still the highest honor in the field of information theory that Shannon invented.

Kyoto Prize

In 1985, he was given the very first Kyoto Prize to honor his invention of information theory. Known as the Japanese Nobel Prize, the Kyoto Prize awards people who have contributed significantly toward progress in science, technology, art, or philosophy.

Other Awards

Shannon earned many awards during his life as well as posthumously. Some of the most important ones include:

- National Medal of Science

- Stuart Ballantine Medal

- Harold Pender Award

- John Fritz Medal

- Harvey Prize, of which he was the first recipient

Claude Shannon: Published Works and Books

Collected Papers

Shannon’s papers were compiled into book form and published in 1993 under the title “Collected Papers.” The book is composed of 127 of Shannon’s publications discussing technical subjects like computing, communications, and artificial intelligence as well as offbeat topics like mind-reading machines and juggling. It contains his most important papers, including “A Mathematical Theory of Communication.”

Posthumous Documentary: The Bit Player

After his death, the IEEE Foundation funded a documentary about Shannon’s life and contributions. It mixed archival films and new interviews with entertaining animations to tell a story of the inventor who changed the world without ever losing his whimsical sense of curiosity. “The Bit Player” was the first documentary made by the IEEE.

Claude Shannon Quotes

- “Information is the resolution of uncertainty.”

- “We know the past but cannot control it. We control the future but cannot know it.”

The image featured at the top of this post is ©Unknown author / public domain