It was Gordon Moore, co-founder and chairman emeritus of computer giant Intel, who observed that the number of transistors on a chip (a rough measure of a computer’s complexity) doubled about every two years. If the 1950s saw computers entering the workforce and the 1960s saw computers becoming smaller, more accessible, more affordable, and more capable, then you can probably imagine the kind of advancements that took place throughout the 1970s. As a matter of fact, going by Moore’s hypothesis, a doubling every two years compounds to five doublings per decade, so computers were roughly 32 times as complex at the start of the 1970s as they were at the start of the 1960s.
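To check the arithmetic behind a two-year doubling, here is a minimal sketch. The function name `growth_factor` is purely illustrative, and the steady two-year doubling period is an idealization of Moore’s observation, not a measured figure.

```python
def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Multiplier after `years` if a quantity doubles every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


# One decade at a doubling every two years is five doublings: 2 * 2 * 2 * 2 * 2.
print(growth_factor(10))  # 32.0
```

Because the growth compounds, ten years of doubling every two years multiplies complexity by 32, not by 10.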

From the continued advancements in features, design, speed, technology, storage, and programming languages to the ever-broadening use of personal computers, home computers, and work computers, the 1970s were a truly prolific decade for this kind of technology, especially as the third generation gave way to the fourth and computers became far more capable and accessible.

Continue reading to learn the facts about the history of computers in the 1970s — including facts on their features, design, speed, storage, price, uses, and more.

1970

Intel Debuts Dynamic RAM

Founded in 1968, Intel quickly became a global force in the computing industry. The company proved this in 1970 with the release of the 1103, the world’s first commercially available dynamic RAM chip. With a capacity of 1,024 bits (one Kbit), it put Intel on the map in a major way and sent companies like IBM scrambling to compete.

1971

Up Close and Personal

While the 1970s would eventually be defined by home computers and personal computers, the personal computer was still a novel concept in 1971. Several companies put out their own early personal computers, such as the Kenbak-1, the CTC Datapoint 2200, and the HP 9800, but it’s hard to say which of them was the very first to break this new ground. Each came with its own set of pros and cons, but if you were lucky enough to own one of these machines, you were probably more than willing to overlook the cons in favor of the new features, the sleek design, the speed, and all the other advancements.

Intel Debuts the First Commercial Microprocessor

Only a year after bursting onto the scene with its dynamic RAM chip, Intel followed up with the release of the first commercially available microprocessor. A 4-bit processor containing 2,300 transistors, the Intel 4004 could execute roughly 60,000 instructions per second and ran at a maximum clock speed of 740 kHz.
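A rough sketch of how the clock speed relates to instruction throughput is shown below. It assumes a detail that is not stated in this article: that the 4004 needs 8 clock periods per machine cycle and that its instructions take either one or two machine cycles. The names used are illustrative only.

```python
CLOCK_HZ = 740_000            # maximum clock speed quoted above
CLOCKS_PER_MACHINE_CYCLE = 8  # assumption, not taken from this article


def instructions_per_second(machine_cycles_per_instruction: int) -> float:
    """Throughput if every instruction takes the given number of machine cycles."""
    return CLOCK_HZ / (CLOCKS_PER_MACHINE_CYCLE * machine_cycles_per_instruction)


print(instructions_per_second(1))  # 92500.0 (all one-cycle instructions)
print(instructions_per_second(2))  # 46250.0 (all two-cycle instructions)
```

Under those assumptions, a real program mixing both kinds of instructions would land between the two bounds, which is consistent with a figure of roughly 60,000 instructions per second.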

Email Booms

Sometimes, an idea is just way too far ahead of its time to truly catch on. Other times, it catches on instantly and stays big. The latter was the case with Ray Tomlinson’s development of email. Using ARPANET, Tomlinson was able to send messages between two different computers. Until then, messages could only be exchanged between users logged onto the same computer. Email was an instant hit and hasn’t gone away since.

Start the Presses

While Xerox’s copy machine business was still relatively young, physicist Gary Starkweather had identified a completely new way to create an image: by using a laser. Unlike the reception Tomlinson’s email received, Xerox executives weren’t interested while the copy machine was doing as well as it was. Convinced of the idea’s potential, Starkweather moved to Xerox PARC in 1971, where he and his colleagues built a working laser printer away from the people who had told them no. The invention went on to become a major success for Xerox, and the company owed Starkweather and crew a huge apology.

1972

Let the Games Begin

The year 1972 saw the founding of Atari and the release of Pong, two major steps toward the massive success of video games that awaited the world just a few years from this point in history.

Welcome to the New Age

While there is no universally agreed starting point for the fourth generation of computers, many experts on the subject date it to around 1972. However, it’s less about the time and more about the technology: Anything that uses microprocessors or Large Scale Integration (LSI) circuits is considered a fourth-generation computer. Typically, fourth-gen technology has anywhere from 500 to 10,000 components on a chip.

1973

Honey, I Shrunk the Computer

Even though supercomputers were still a sought-after technology, the early ’70s saw a growing interest in microcomputers. (After all, it was only inevitable after the invention of the microprocessor and the push for smaller mainframes.) In 1973, the Micral N emerged. Developed by the French company R2E, it is often described as the first microcomputer to be sold commercially.

Logging On

From the first work on the TCP/IP protocol suite to the invention of Ethernet, 1973 was the most important year yet for the future of the Internet. Whether it was France’s CYCLADES, Europe’s EIN, Britain’s NPL, or the pre-existing ARPANET, TCP/IP would give these networks a common way to link with one another, creating an internetwork, a.k.a. the Internet, while Ethernet connected the machines within a local network.

1974

The Arrival of the Alto

Many associate the Xerox name with printers and copiers, but here are the facts: Xerox PARC is also hugely responsible for the computer as we know it today. Equipped with a mouse, a local network linking it to other Altos, a window-based graphical interface, and the ability to share and print files, Xerox’s computer, the Alto, was in use by 1974 and effectively set the stage for Apple’s Lisa and Mac computers, even though it was never sold as a commercial product.

1975

Get a Hobby

For those who had an interest in learning how computers worked, the MITS Altair 8800 allowed hobbyists to order a computer kit and assemble the unit themselves. While these hobby computers were extremely limited in programming languages, storage, and uses, their low price and their do-it-yourself appeal made them a huge success in 1975.

The Formation of Microsoft

Also in 1975, two men by the names of Bill Gates and Paul Allen managed to do something pretty novel: implement the BASIC programming language on a microcomputer (the aforementioned MITS Altair, as a matter of fact). Out of this seemingly simple project, Gates and Allen formed Microsoft. The rest, as they say, is history. IBM was no longer the only titan in the game.

1976

Apple Doesn’t Fall Far From the Tree

Less than a year after Bill Gates and Paul Allen formed Microsoft, Steve Wozniak and Steve Jobs came along and founded Apple Computer to sell their single-board computer, the Apple I. IBM now had several major competitors.

Supercomputers Endure

Even as more and more companies shifted focus to microcomputers, home computers, and personal computers, there was still a need for supercomputers. In a time when everyone else was going small, the Cray-1 supercomputer was not afraid to go big. Famous for its horseshoe-shaped (C-shaped) cabinet, the Cray-1 made vector processing a practical, commercially successful reality.

1977

New and Improved Technology

1977 saw several companies taking a good, hard look at their line of products and making major improvements from there. Apple released the Apple II, greatly improving upon its initial design. Tandy, through its Radio Shack stores, introduced the TRS-80, a desktop computer with a microprocessor, memory, a video display, and a built-in BASIC programming language. Intel, meanwhile, kept improving its microprocessors, work that led to the 1978 release of the Intel 8086, a 16-bit processor whose instruction set architecture (x86) remains one of the most successful and widely used in the history of personal computers.

1978

Computers Reach the White House

Although the federal government had bankrolled all kinds of computer research and development in the decades prior, the Carter administration was the first presidential administration to install computers in the White House. Carter and his staff received HP 3000s, along with a Xerox Alto and an IBM laser printer for the Oval Office.

1979

Dawn of the Discs

While disks with a “k” had been a key component of computer technology for decades, the late 1970s saw discs with a “c” emerge onto the scene. From LaserDiscs to CDs, these novel optical storage media put the future of tape storage in jeopardy for the first time.

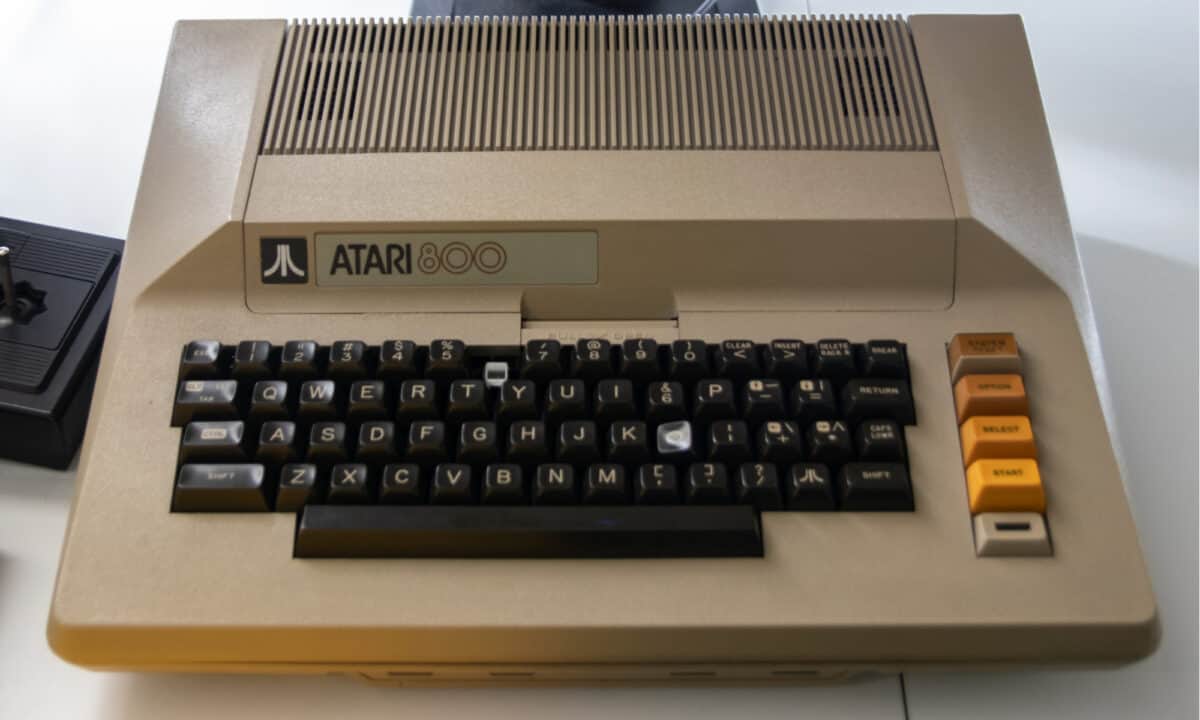

Alone Together

Throughout the 1970s, many devices were aimed at bringing people and their technology together onto one network. This raised new questions, though: What if you didn’t want to be connected to others? What if you just wanted to do some work offline? These concerns led to many new computers, such as Atari’s Model 400 and Model 800 microcomputers or Texas Instruments’ TI-99/4 microcomputer, coming equipped with both online and offline capabilities.

Hello Moto

In 1979, Motorola released the 68000 microprocessor — the first entry in the 68k series of microprocessors and the eventual key component of many hugely successful Apple and Atari products in the following decade.

Up Next…

For more information on computers throughout the decades, check these out:

- Computers in the 1950s: During this era, computers entered the workforce. Find out what devices were created and what innovations they brought to the tech sector.

- Computers in the 1960s: During this era, computers shrank in size and in cost. Find out what innovations graced the decade in this article.

- Computers in the 1980s: During this era, computers became faster and gained improved designs. Find out the one innovation that made it possible, as well as the luminaries who transformed the decade and the tech sector.

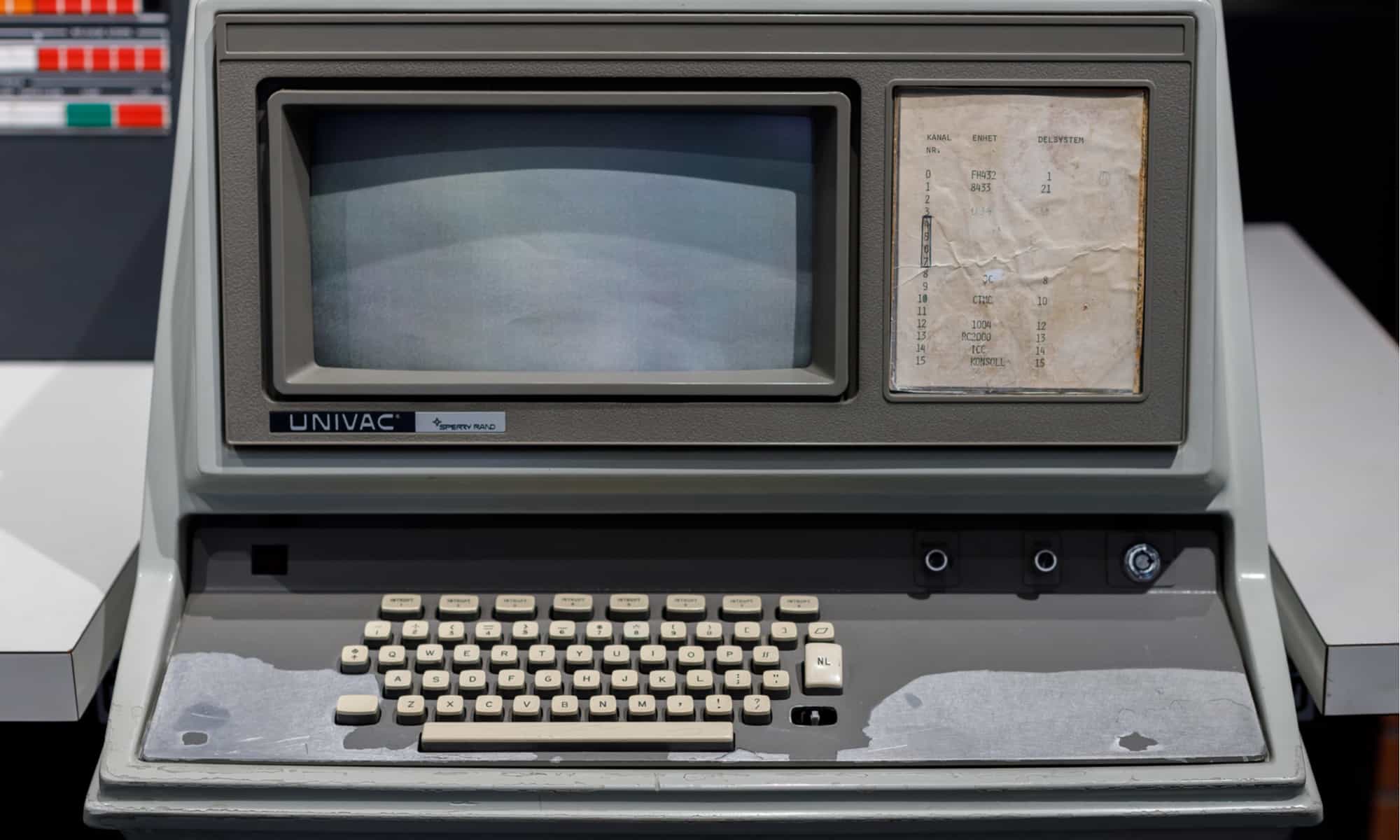

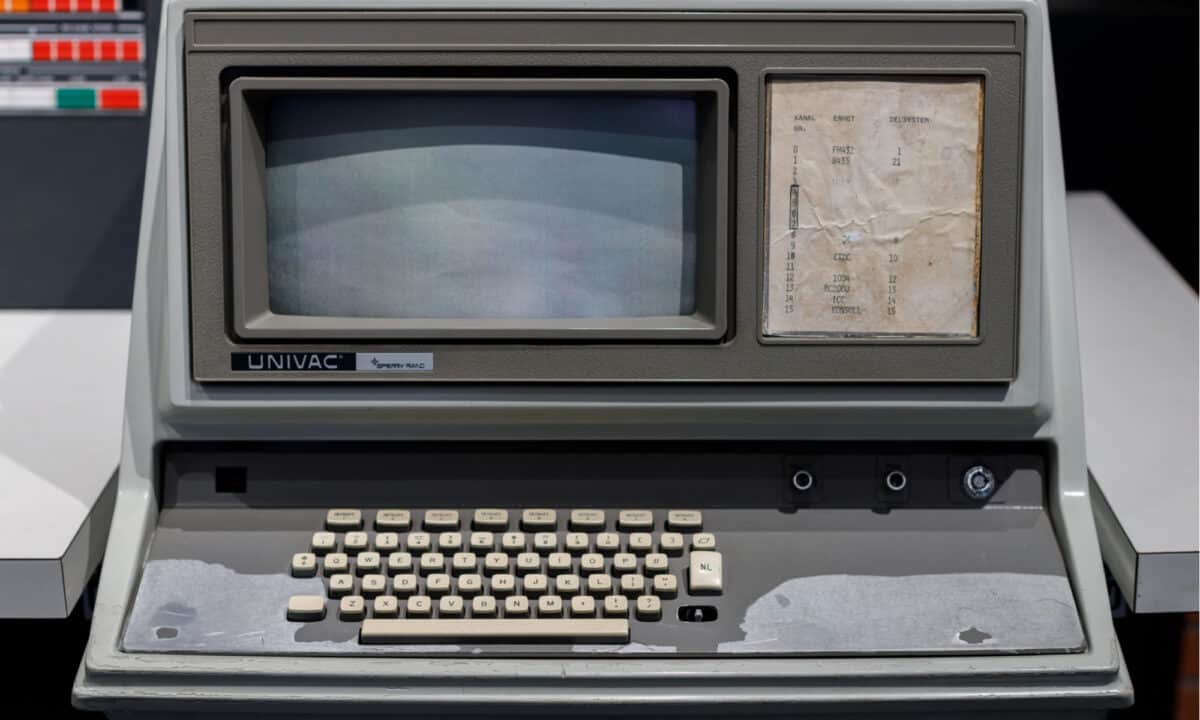

The image featured at the top of this post is ©MAXSHOT.PL/Shutterstock.com.